Property | SQL warehouse1 | All-purpose cluster2 | Job cluster3 |

|---|---|---|---|

Source Type - Single Object | Yes | Yes | Yes |

Source Type - Parameter | Yes | Yes | Yes |

Source Type - Query | Yes | No | No |

Source Type - Multiple Objects | Yes | No | No |

Parameter | Yes | No | Yes |

Filter | Yes | No | Yes |

Sort | Yes | No | No |

Select distinct rows only | No | No | No |

Database Name | Yes | No | Yes |

Table Name | Yes | No | Yes |

Pre SQL | Yes | No | No |

Post SQL | Yes | No | No |

SQL Override | Yes | No | No |

Staging Location | Yes | No | Yes |

Job Timeout | No | No | Yes |

Job Status Poll Interval | No | No | Yes |

DB REST API Timeout | No | No | Yes |

DB REST API Retry Interval | No | No | Yes |

Tracing Level | Yes | No | Yes |

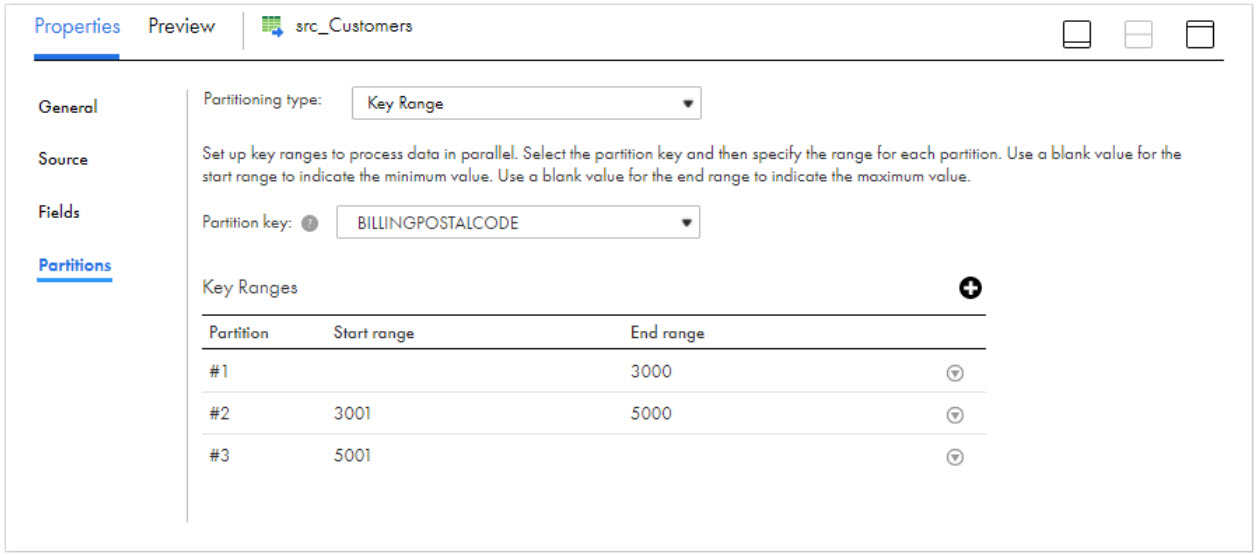

Key Range Partitioning | Yes | No | No |

1The Secure Agent connects to the SQL warehouse at design time and runtime. 2The Secure Agent connects to the all-purpose cluster to import the metadata at design time. 3The Secure Agent connects to the job cluster to run the mappings. | |||

| Property | Description |
|---|---|
| Connection | Name of the source connection. Select a source connection or click New Parameter to define a new parameter for the source connection. Note: You can completely parameterize a source connection in a parameter file only for the Single Object source type. Parameterization doesn't apply to mappings in advanced mode. |
| Source Type | Type of the source object. Select one of the following source types: Single Object, Multiple Objects, Query, or Parameter. The Multiple Objects and Query source types don't apply to all-purpose clusters and job clusters. Note: For a multiple-object source, a database name override applies to all imported objects, while a table name override applies only to the first table in the source. |
| Object | Name of the source object. You cannot use the data preview option if the source fields contain hierarchical data types. |
| Query¹ | Click Define Query and enter a valid custom query. The Query property appears only if you select Query as the source type. You can parameterize a custom query object at runtime in a mapping. You can also enable Unity Catalog settings in a custom query to access a table in a specific catalog. |

¹ Doesn't apply to mappings in advanced mode.

| Property | Description |
|---|---|
| Query Options | Filters the source data based on the conditions you specify. Click Configure to configure a filter option. Add conditions to the read operation to filter records from the source. Filtering reduces the number of rows that the Secure Agent reads from the source. Note: You can use the Contains, Ends With, and Starts With operators to filter records only on SQL warehouses, formerly called SQL endpoints. |
| Filter | Filters records based on the filter condition. You can specify a simple filter or an advanced filter. |
| Sort¹ | Sorts records based on the conditions you specify. You cannot sort data when you select the Query source type. You cannot use a parameter file to parameterize the sort condition. You cannot sort partitioned data. |

¹ Doesn't apply to mappings in advanced mode.

| Property | Description |
|---|---|
| Database Name | Overrides the database name specified in the connection and the database name used during metadata import. Note: To read from multiple objects, ensure that you specify the database name in the connection properties. |
| Table Name | Overrides the table name used in the metadata import with the table name that you specify. |
| Pre SQL | The pre-SQL command to run on the Databricks source table before the Secure Agent reads the data. For example, if you want to update records in the database before you read the records from the table, specify a pre-SQL statement. The query must include a fully qualified table name. You can specify multiple pre-SQL commands, each separated with a semicolon. |
| Post SQL | The post-SQL command to run on the Databricks table after the Secure Agent completes the read operation. For example, if you want to delete some records after the latest records are loaded, specify a post-SQL statement. The query must include a fully qualified table name. You can specify multiple post-SQL commands, each separated with a semicolon. |
| SQL Override | Overrides the default SQL query used to read data from a Databricks custom query source. The column names in the SQL override query should match the column names in the custom query in a SQL transformation. Note: To override the query, the source metadata should be the same as the metadata of the SQL override. |
| Staging Location | Relative directory path to store the staging files. Note: When you use Unity Catalog, you must provide a pre-existing location on your cloud storage as the staging location. |
| Job Timeout | Maximum time in seconds for the Spark job to complete processing. If the job doesn't complete within the specified time, the Databricks cluster terminates the job and the mapping fails. If you don't specify a job timeout, the mapping succeeds or fails based on job completion. |
| Job Status Poll Interval | Interval in seconds at which the Secure Agent checks the job completion status. Default is 30 seconds. |
| DB REST API Timeout | Maximum time in seconds for which the Secure Agent retries REST API calls to Databricks when a call fails because of a network connection error or because the REST endpoint returns a 5xx HTTP error code. Default is 10 minutes. |
| DB REST API Retry Interval | Interval in seconds at which the Secure Agent retries a REST API call when the call fails because of a network connection error or because the REST endpoint returns a 5xx HTTP error code. This value doesn't apply to the job status REST API, which uses the Job Status Poll Interval value instead. Default is 30 seconds. |
| Tracing Level | Sets the amount of detail that appears in the log file. You can choose terse, normal, verbose initialization, or verbose data. Default is normal. |
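
Together, the Job Timeout and Job Status Poll Interval properties describe a polling loop. The following Python sketch models that loop under stated assumptions. The names `wait_for_job` and the `get_status` callback are hypothetical. So are the status strings `"SUCCESS"`, `"FAILED"`, and `"TIMED_OUT"`. None of them come from the Secure Agent or the Databricks Jobs API. The clock and sleep functions are injectable so that you can exercise the loop without waiting.

```python
import time
from typing import Callable, Optional

# Hypothetical terminal states; not the values the Databricks Jobs API returns.
TERMINAL_STATES = {"SUCCESS", "FAILED"}


def wait_for_job(
    get_status: Callable[[], str],
    poll_interval_s: float = 30.0,          # Job Status Poll Interval (default 30 s)
    job_timeout_s: Optional[float] = None,  # Job Timeout (None = not specified)
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Poll a job until it finishes or the optional timeout elapses.

    Sketch only: returns "TIMED_OUT" where, per the property description,
    the Databricks cluster would terminate the job and the mapping would fail.
    """
    start = clock()
    while True:
        status = get_status()
        if status in TERMINAL_STATES:
            # With no timeout, the outcome depends only on job completion.
            return status
        if job_timeout_s is not None and clock() - start >= job_timeout_s:
            return "TIMED_OUT"
        sleep(poll_interval_s)
```

With the default poll interval, a job that reports completion on the third status check is polled twice at 30-second intervals, so the sketch returns after about 60 seconds.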
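
The DB REST API Timeout and DB REST API Retry Interval properties describe a retry policy with a deadline for transient failures. The following Python sketch shows one way such a policy can behave. It assumes that network errors and 5xx responses surface as a hypothetical `TransientError`, and that retrying stops once the elapsed time reaches the timeout. The names `call_with_retry` and `TransientError` are illustrative only and are not part of any Informatica or Databricks API.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical stand-in for a network error or a 5xx HTTP response."""


def call_with_retry(
    call: Callable[[], T],
    api_timeout_s: float = 600.0,    # DB REST API Timeout (default 10 minutes)
    retry_interval_s: float = 30.0,  # DB REST API Retry Interval (default 30 s)
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> T:
    """Retry `call` on transient errors until it succeeds or time runs out.

    Any other exception type propagates immediately without a retry.
    """
    start = clock()
    while True:
        try:
            return call()
        except TransientError:
            # Assumption: stop retrying once the elapsed time reaches the timeout.
            if clock() - start >= api_timeout_s:
                raise
            sleep(retry_interval_s)
```

With the defaults, and ignoring the time each call takes, a call that keeps failing is attempted at 0, 30, ..., 600 seconds. That is 21 attempts before the sketch re-raises the last error.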