HDFS Mappings

Create an HDFS mapping to read data from or write data to HDFS.

You can read and write fixed-width and delimited file formats. You can also read or write compressed files by specifying the compression format of the files. You can read text files and binary file formats, such as sequence files, from HDFS. To parse a file, you can use the binary stream output of the complex file data object as input to a Data Processor transformation.

You can define the following objects in an HDFS mapping:

- Flat file data object or complex file data object operation as the source to read data from HDFS.

- Transformations.

- Flat file data object as the target to write data to HDFS, or any other target.

Validate and run the mapping. You can deploy the mapping and run it, or you can add the mapping to a Mapping task in a workflow.

HDFS Data Extraction Mapping Example

Your organization needs to analyze purchase order details such as customer ID, item codes, and item quantity. The purchase order details are stored in a semi-structured compressed XML file in HDFS. The hierarchical data includes a purchase order parent hierarchy level and a customer contact details child hierarchy level. Create a mapping that reads all the purchase records from the file in HDFS. The mapping must convert the hierarchical data to relational data and write it to a relational target.

You can use the extracted data for business analytics.

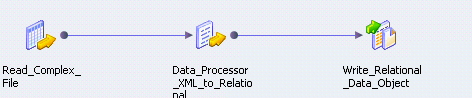

The following figure shows the example mapping:

You can use the following objects in the HDFS mapping:

- HDFS Input

  The input object, Read_Complex_File, is a Read transformation that represents a compressed XML file stored in HDFS.

- Data Processor Transformation

  The Data Processor transformation, Data_Processor_XML_to_Relational, parses the XML file and provides a relational output.

- Relational Output

  The output object, Write_Relational_Data_Object, is a Write transformation that represents a table in an Oracle database.

When you run the mapping, the Data Integration Service reads the file as a binary stream and passes it to the Data Processor transformation. The Data Processor transformation parses the file and provides a relational output. The output is written to the relational target.
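To make the run-time flow concrete, the following Python sketch mimics the same three stages outside of Informatica: expose a compressed file as a binary stream, parse the XML, and emit flat rows. It is an illustration only and does not reflect how the Data Integration Service or the Data Processor transformation is implemented. The gzip compression format, the element names (`PurchaseOrder`, `CustomerID`, `Item`, `ItemCode`, `Quantity`), and the function names are assumptions chosen for the example.

```python
import gzip
import io
import xml.etree.ElementTree as ET

# Hypothetical purchase order file; the element names are assumptions.
SAMPLE_XML = b"""<PurchaseOrders>
  <PurchaseOrder number="PO-1001">
    <CustomerID>C-17</CustomerID>
    <Item><ItemCode>A100</ItemCode><Quantity>2</Quantity></Item>
    <Item><ItemCode>B200</ItemCode><Quantity>5</Quantity></Item>
  </PurchaseOrder>
  <PurchaseOrder number="PO-1002">
    <CustomerID>C-42</CustomerID>
    <Item><ItemCode>A100</ItemCode><Quantity>1</Quantity></Item>
  </PurchaseOrder>
</PurchaseOrders>"""


def read_binary_stream(compressed: bytes) -> gzip.GzipFile:
    """Stand-in for the read step: present the compressed bytes as a
    decompressed binary stream (gzip is assumed here)."""
    return gzip.GzipFile(fileobj=io.BytesIO(compressed))


def parse_to_rows(stream):
    """Stand-in for the parse step: flatten each purchase order into one
    row per item: (order number, customer ID, item code, quantity)."""
    rows = []
    # iterparse consumes the stream incrementally instead of loading it whole.
    for _, elem in ET.iterparse(stream, events=("end",)):
        if elem.tag == "PurchaseOrder":
            order_number = elem.get("number")
            customer_id = elem.findtext("CustomerID")
            for item in elem.findall("Item"):
                rows.append((
                    order_number,
                    customer_id,
                    item.findtext("ItemCode"),
                    int(item.findtext("Quantity")),
                ))
            elem.clear()  # release the subtree once its rows are emitted
    return rows


if __name__ == "__main__":
    compressed_file = gzip.compress(SAMPLE_XML)  # simulates the file in HDFS
    for row in parse_to_rows(read_binary_stream(compressed_file)):
        print(row)
```

Each printed tuple corresponds to one row that a relational target would receive.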

You can configure the mapping to run in a native or Hadoop run-time environment.

Complete the following tasks to configure the mapping:

1. Create an HDFS connection to read files from the Hadoop cluster.

2. Create a complex file data object read operation. Specify the following parameters:

   - The file as the resource in the data object.

   - The file compression format.

   - The HDFS file location.

3. Optionally, specify the input format that the Mapper uses to read the file.

4. Drag and drop the complex file data object read operation into a mapping.

5. Create a Data Processor transformation. Configure the following properties in the Data Processor transformation:

   - An input port set to buffer input and binary data type.

   - Relational output ports, depending on the number of columns you want in the relational output. Specify the port size for the ports. Use an XML schema reference that describes the XML hierarchy. Specify the normalized output that you want. For example, you can specify PurchaseOrderNumber_Key as a generated key that relates the Purchase Orders output group to a Customer Details group.

   - A Streamer object, specified as the startup component.

6. Create a relational connection to an Oracle database.

7. Import a relational data object.

8. Create a Write transformation for the relational data object and add it to the mapping.
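The normalized output described in step 5 can be pictured with a small Python sketch. It splits hierarchical purchase order XML into two output groups and relates them through a generated key named PurchaseOrderNumber_Key, as in the example above. This is a conceptual illustration, not the Data Processor transformation itself. The XML layout, the table and column names other than PurchaseOrderNumber_Key, and the use of an in-memory SQLite database as a stand-in for the Oracle target are all assumptions.

```python
import sqlite3
import xml.etree.ElementTree as ET
from itertools import count

# Hypothetical hierarchical input: a purchase order parent level with a
# customer details child level. Element names are assumptions.
SAMPLE_XML = """<PurchaseOrders>
  <PurchaseOrder>
    <OrderNumber>PO-1001</OrderNumber>
    <CustomerDetails><Name>Ada Perez</Name><Phone>555-0100</Phone></CustomerDetails>
  </PurchaseOrder>
  <PurchaseOrder>
    <OrderNumber>PO-1002</OrderNumber>
    <CustomerDetails><Name>Li Wei</Name><Phone>555-0101</Phone></CustomerDetails>
    <CustomerDetails><Name>Sam Okoro</Name><Phone>555-0102</Phone></CustomerDetails>
  </PurchaseOrder>
</PurchaseOrders>"""


def normalize(xml_text):
    """Split the hierarchy into two row groups linked by a generated key."""
    key_gen = count(1)  # generated key: 1, 2, 3, ...
    orders, contacts = [], []
    for po in ET.fromstring(xml_text).findall("PurchaseOrder"):
        key = next(key_gen)
        orders.append((key, po.findtext("OrderNumber")))
        for cust in po.findall("CustomerDetails"):
            # Every child row carries the key of its parent purchase order.
            contacts.append((key, cust.findtext("Name"), cust.findtext("Phone")))
    return orders, contacts


def load(conn, orders, contacts):
    """Write both groups to relational tables (SQLite stands in for Oracle)."""
    conn.execute(
        "CREATE TABLE purchase_orders ("
        "PurchaseOrderNumber_Key INTEGER PRIMARY KEY, order_number TEXT)"
    )
    conn.execute(
        "CREATE TABLE customer_details ("
        "PurchaseOrderNumber_Key INTEGER "
        "REFERENCES purchase_orders (PurchaseOrderNumber_Key), "
        "name TEXT, phone TEXT)"
    )
    conn.executemany("INSERT INTO purchase_orders VALUES (?, ?)", orders)
    conn.executemany("INSERT INTO customer_details VALUES (?, ?, ?)", contacts)
    conn.commit()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    load(connection, *normalize(SAMPLE_XML))
    query = (
        "SELECT o.order_number, c.name FROM purchase_orders o "
        "JOIN customer_details c "
        "ON o.PurchaseOrderNumber_Key = c.PurchaseOrderNumber_Key"
    )
    for row in connection.execute(query):
        print(row)
```

Joining the two tables on the generated key reassembles each purchase order with its customer contacts, which is the relationship the key is meant to preserve after the hierarchy is flattened.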